by L. Peliti (2 credits)

Starting date: Friday, 20 April 2018

When: every Friday, 11:00-13:00

Where: SISSA, First Floor, Room 138

Number of lectures: 6

Programme

1. Motivation:

- Entropy and information, from Boltzmann to Gibbs to Shannon

- Information devices as non-equilibrium statistical systems

2. Stochastic thermodynamics:

- Basic structure

- Relation with ordinary thermodynamics and statistical mechanics

3. Prerequisites:

- Equilibrium thermodynamics

- Equilibrium statistical mechanics

- Basics of information theory:

– Shannon entropy

– Kullback-Leibler divergence

– Mutual and conditional information

- Basics of large-deviation theory

4. Basic concepts of stochastic thermodynamics:

- Systems in stochastic thermodynamics

- Work and heat in stochastic thermodynamics

- Mesoscopic and calorimetric heat

- Fluctuating entropy

- Multiple baths

5. Fluctuation relations:

- Irreversibility and entropy production

- Fluctuation relations: integral and detailed

- Jarzynski and Crooks relations and their use

- Hatano-Sasa relation

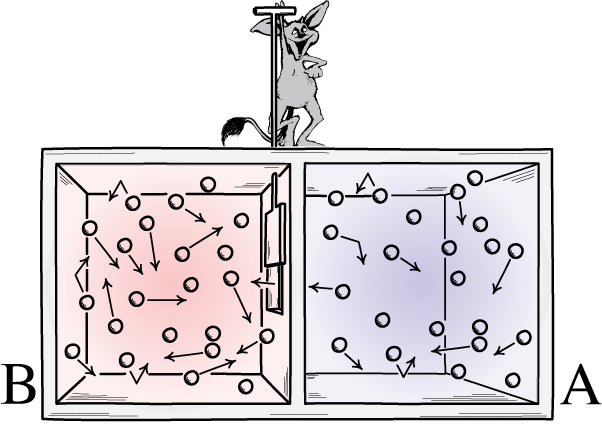

6. Thermodynamics of information:

- Thermodynamical computation systems

- Reversibility:

– In thermodynamics

– In computation

– In stochastic thermodynamics

- Entropy balance in computation and feedback

- Speed-accuracy tradeoffs

7. Experimental Maxwell demons:

- Sagawa-Ueda-Sano Maxwell staircase

- Landauer principle

- Sensing

8. Ramifications:

- Analogy between work extraction, gambling and population dynamics

- Statistical physics of adaptation

- Historical (retrospective) fitness